All Models Are Wrong

We can only hope to create an attribution algorithm that is suggestive and directional, to create a model that helps us test theories, and to create a model that is useful.

When I was four years old, my brother and I got a Lionel train set for Christmas. It was pure awesome. My father wanted to play with it with us and we loved that too. We played with it for years and always brought it out just before Christmas. It fueled our imaginations.

It was electric. It was detailed. It was a scale model. That meant that, while you could really launch a helicopter from a flatbed car, you couldn’t ride it. It was just a model.

When I was nine years old, we went to the New York World’s Fair and were dumbfounded by General Motors’ Futurama exhibit with its Lunar Colony, Undersea City, and City of the Future.

By the time I was old enough to go places by myself, this stuff would have come true!

But for now, it was only an imagined place. It was just a model.

When I went to college, I gave serious thought to architecture. I liked the idea of designing something from scratch; something permanent; something I could point at and say, “I made that.”

I had to admit that what I really wanted was to build those astounding, perfect, intricate, 3D cardboard mock-ups of what the building would look like. They would have tiny trees and little cars and people the size of pencil erasers.

They would be elaborate and complex and they would completely communicate a vision. They would spell out everything anybody needed to know about how I saw the future.

They would be visually engrossing, but they would lack the information necessary to actually build the thing. You couldn’t really open the doors and windows. It was just a model.

Accountants build accounting systems that show how money flows into, out of, and between businesses. Website builders rely on information architecture designers who create a map of how people might expect to find what they are looking for and accomplish a specific task.

Because (as David Weinberger likes to say) the world is messy, analog, and open to interpretation, these models only work well enough to get by. Using any software or website, we quickly come upon circumstances its designers did not anticipate well enough to accommodate, and we realize it’s not really the way the world works. It’s just a model.

On March 28 of this year, George Edward Pelham Box passed away. He was a prolific author about all things statistical and holds an honored place in my heart for his observation that, “All models are wrong, but some are useful.”

Where marketing meets big data, there is an irrational expectation that we can collect enough information that it will auto-magically correlate and causality will drop out the bottom. This is hype of the highest order.

We are never going to be able to build a detailed model that describes and then predicts what humans do. Even with all of the behavioral, transactional, and attitudinal data in the world, humans are just too messy, analog, and open to too many interpretations.

Even as we make progress predicting the movement of swarming insects, fish, and cancer cells (Wired Magazine, “The Power of Swarms”), we can only hope to create an attribution algorithm that is suggestive and directional. We can only hope to create a model that helps us test theories. We can only hope to create a model that is useful.

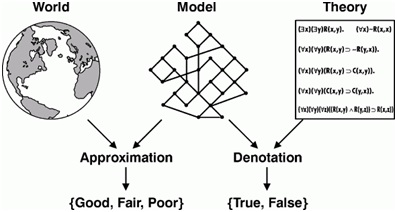

Source: http://www.jfsowa.com/ontology/causal.htm

For all of the data we collect, for all of the data storage we can fill, and for all of the big data systems we can cluster in the cloud, our constructs are not going to provide facts, unearth hard and fast rules, or give us ironclad assurance that our next step will result in specific returns on our investment.

They are only models.

But some are useful.

Model Steam Train and Small Buildings Model images via Shutterstock.
