Better Than Finger-Cooking: Ad Testing Tool Use for the Reluctant Artisan

If you're involved in ad testing, give these simple statistical calculators a try and ensure you have the familiarity with mathematical tools and foundations to succeed.

Without even knowing it, all PPC advertisers are in the business of using predictive models to improve response rates. The barriers to entering the field are so low, though, that we may take its awe-inspiring power for granted.

Hundreds of thousands of us are taking on complex experiments that used to be restricted to a small number of top direct mail marketers. Yet we’re casually running a far greater volume of tests than those mad geniuses ever did, given that our testing tools and response channels have grown exponentially more nimble and powerful. Create an ad in seconds; it’s showing to high-intent searchers minutes later.

Predictive models? Exponentially more powerful?

Uh-oh.

Nassim Taleb recently observed: “I am afraid to conclude that the only form of stable society, outside of the hunter-gatherer environment, & one that does not blow up, is an artisanal one. Complexification drives institutions –and societies — to maximal fragility.”

So in related news, I’m still running a PPC agency. That’s vaguely artisanal, despite the scientific nature of the analysis we undertake constantly. Fortunately, it is also “stable.”

While Big Data has a sexy ring to it, it’s worth heeding Taleb’s warning: large, top-down systems sometimes fail catastrophically, especially when their designers have no “skin in the game.” The smaller scale of our localized, owner-driven “greed is good” testing efforts may indeed be more robust than trusting outsiders with our fate. Lay out a simple set of goals, cultivate a modest level of greed; then “cherry-pick” whatever data sources, powerful software, etc., are essential to the enterprise (no more, no less).

Make no mistake, though. We tend to overestimate our own “off the cuff” efforts. Indeed, we tend to overestimate the value of new experiments and tests, a common mistake in the social sciences that seems to spurn a more informed “Bayesian” approach to statistical learning. We need to be as rigorous as possible, or quite frankly, Big Data is going to make us look silly.

So to ensure that we remain robustly skilled, everyone should gain enough familiarity with the math underlying various tools. If you do something called “testing,” why not sample a few simple statistical calculators hands-on, to build up your own intuitive understanding of what types of results typically meet your winning criteria? I like playing with these tools the same way my Dad enjoys tying flies to go fly fishing, and the way my wife gleefully acquires various low-tech contraptions that can extract the juice from a lemon.

So, today we’re talking about the impact of ad creative. This seems almost quaint in a world of behavioral advertising and programmatic adtech, where the intent of a given user may increasingly be known. The effort of looking at the impact of different versions of ad creative is “keyword advertising classic.” It refers to the attempt to turn a user who signaled high intent with a keyword search into a sale or valued action, either immediately or within 30 days or so. That intent is high, but not as extremely high as with some forms of behavioral advertising. One day, “keyword advertising classic” may wane in importance. For now, campaigns live and die on the quality of this creative.

The matter-of-factness of the quotidian vernacular in today’s “For Dummies” world of digital marketing implies that there is a simple set of best practices that just about anyone should be able to master with the right “training.”

But is any of that training in statistics? How valid are our judgments?

Marketing happens every day, of course, without benefit of graduate-level training in statistics. We don’t necessarily have to devote our lives to that kind of training, if all we really need to do is to improve our skill at poking the box.

Ad testing is the ultimate poke-the-box thrill. Everyone is going to develop their own unique standards and tactics in this area. Many times, it’s no great mystery as to what happened: the most significant difference was in something major like the first word in the headline, or the mention of a shipping offer. Many of you have been doing this for five or ten years or more, and are pretty good at it.

But when was the last time you actually ran your tests through a statistical significance calculator? Several are easy to come across online, and easy to modify to suit your chosen KPI. I don’t recommend one over another; they all do about the same thing.

Sitting down and really doing the calculations is no trivial exercise. When you dig a bit, it’s evident that most advertisers are all over the map when it comes to their testing methodology – if they even have one. I can’t tell you how many times I’ve had this kind of conversation with a marketer. I’ll discover that an individual has not fully decided for herself which KPI to tie decisions to. “Oh, of course we look solely at an ROI measure such as CPA,” she’ll say proudly. And then, just as quickly, the story changes to a different KPI, like CTR. There is no consistency to the approach.

The likely result is simple enough. Winning ads don’t get chosen. Statistical anomalies get chosen over proven winners. Mistakes are made. ROI suffers.

Beware of several outside influences that can render statistical analysis misleading inside PPC platforms.

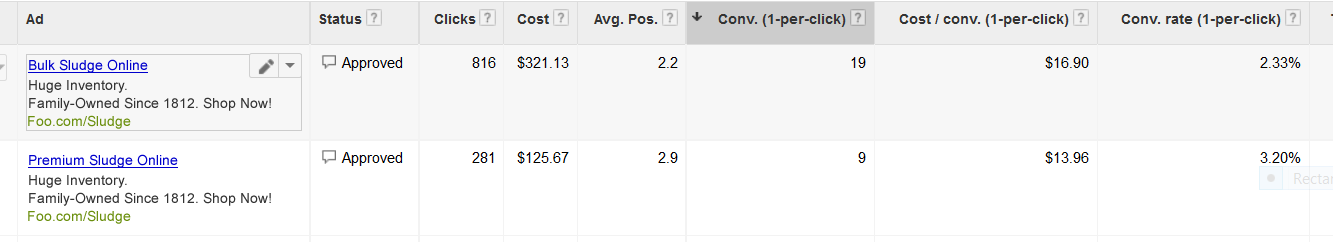

On to a couple of brief examples. But above all, go ahead and walk through this process yourself, by using one of these clunky-looking yet timeless tools. A statistical significance calculator is most accurate for fairly structured tests (same date ranges, even delivery, etc.). But it can also be a helpful yardstick as you develop more understanding of special situations.

It’s often difficult to know if a new ad is truly winning or not, despite a big lead. In this case, where the upstart ad seems to have a great chance to beat the incumbent, we discover that it has reached only 77 percent confidence. Does that mean it’s just a matter of waiting before it inevitably wins, once enough volume is generated?

Nope. That confidence level suggests that there’s a pretty good chance that this early promise will decay (via the timeless pull of regression toward the mean) and the incumbent ad will remain champion. If the challenger does reach 95 percent or 99 percent confidence level, that will be quite a feat indeed. Beating a strong incumbent ad isn’t easy.
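For anyone who wants to see what sits under the hood of such a calculator, here is a minimal sketch in Python. It assumes a pooled two-proportion z-test on conversion rate, which is one common approach; any given online calculator may do something different. The function names and the click and conversion counts are made up for illustration.

```python
import math


def normal_cdf(z):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def confidence_challenger_wins(conv_a, clicks_a, conv_b, clicks_b):
    """One-sided confidence that ad B's true conversion rate beats ad A's.

    Assumes a pooled two-proportion z-test; this is an illustrative choice,
    not necessarily what any particular online calculator uses.
    """
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    if std_err == 0:
        return 0.5  # no variation in the data, so nothing to compare
    z = (rate_b - rate_a) / std_err
    return normal_cdf(z)


# Hypothetical counts: incumbent converts 50 of 1,000 clicks,
# challenger converts 65 of 1,000 clicks.
confidence = confidence_challenger_wins(50, 1000, 65, 1000)
print(f"Confidence the challenger is better: {confidence:.1%}")
```

With these invented counts, the challenger's conversion rate is 30 percent higher in relative terms, yet the result still lands short of the 95 percent bar. A big lead and a proven lead are not the same thing.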

Another unusual situation that can warrant turning to your trusty stats calculator: deliberately testing more versions of ad creative than usual in a high-volume ad group. This certainly looks impressive, because “it’s a lot of testing,” but are you overestimating how many ad versions are practical for testing?

With a large jumble of ambiguous data, the natural tendency is to pick winners too soon. That’s often close to pure guessing. Either run the test to a solid conclusion, or structure higher-impact tests with fewer ad versions. In one such test, my control ad boasts a 6.55 percent conversion rate, while the next best of six remaining ads is only at 5.61 percent. Yet the incumbent’s winning status is only pegged at a 73 percent confidence level. The last-place ad in the group, at a 4.23 percent conversion rate, comes with a wrinkle: it scores by far the highest average order value (AOV). And its Conversion Value/Cost (ROAS) leads all contenders, at 6.0!
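To get a feel for why a crowded test takes so long to resolve, a rough sample-size estimate helps. The sketch below uses the standard normal-approximation formula for comparing two proportions, applied to the 6.55 percent and 5.61 percent conversion rates above. The 95 percent confidence and 80 percent power targets, the even-rotation assumption, and the function name are my own assumptions for illustration.

```python
import math
from statistics import NormalDist


def clicks_needed_per_ad(rate_1, rate_2, confidence=0.95, power=0.80):
    """Approximate clicks per ad needed to tell two conversion rates apart.

    Normal-approximation formula for a two-sided, two-proportion test.
    """
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    mean_rate = (rate_1 + rate_2) / 2
    term_null = z_alpha * math.sqrt(2 * mean_rate * (1 - mean_rate))
    term_alt = z_beta * math.sqrt(rate_1 * (1 - rate_1) + rate_2 * (1 - rate_2))
    return math.ceil(((term_null + term_alt) / (rate_1 - rate_2)) ** 2)


per_ad = clicks_needed_per_ad(0.0655, 0.0561)
# Assumes traffic rotates evenly, so every ad in the group needs the same volume.
for num_ads in (2, 7):
    print(f"{num_ads} ads: about {per_ad * num_ads:,} clicks in total")
```

Under these assumptions, each ad needs on the order of ten thousand clicks before a gap of that size can be called with confidence, and seven ads sharing the rotation need seven times that in total. Fewer, higher-impact versions get you to an answer much sooner.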

AOV tends to regress to the mean. But in this particular case, where the “high AOV” ad creative was deliberately engineered to attract higher-volume purchasers, one would be a fool to give up on an ad that had staked an early lead on a revenue basis despite losing on conversion rate.
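The way the "winner" changes with the KPI is easy to see in a few lines of Python. This is only a sketch: the three ads, their names, and every figure below are hypothetical, chosen to mimic the pattern just described rather than taken from the actual test.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    name: str
    clicks: int
    cost: float
    conversions: int
    revenue: float

    @property
    def conversion_rate(self):
        return self.conversions / self.clicks

    @property
    def aov(self):
        # Average order value: revenue per conversion.
        return self.revenue / self.conversions

    @property
    def roas(self):
        # Conversion value divided by cost.
        return self.revenue / self.cost


def best_by(ads, kpi):
    """Return the ad with the highest value for the named KPI."""
    return max(ads, key=lambda ad: getattr(ad, kpi))


# Hypothetical figures for illustration only.
ads = [
    AdStats("control", clicks=2000, cost=2000.0, conversions=130, revenue=9100.0),
    AdStats("challenger", clicks=2000, cost=2000.0, conversions=112, revenue=7840.0),
    AdStats("high_aov", clicks=2000, cost=2000.0, conversions=85, revenue=12000.0),
]

for kpi in ("conversion_rate", "aov", "roas"):
    print(f"Best by {kpi}: {best_by(ads, kpi).name}")
```

Which ad you keep depends entirely on which KPI you committed to before the test began, which is the argument for settling that question first.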

Ad testing is nothing if not complex!

The more of these statistical checks you observe directly, the more comfortable you’ll be as a judge of patterns. To be sure, you may only be working in one obscure corner of the industry, and your efforts might not be officially sanctioned by the Keepers of the Biggest Data. Even more important, then, that you’re making significant and confident strides when going it alone, with a deep understanding of the principles involved.

There are a number of ways you could automate this process, or better integrate it with AdWords and your workflow. For example, you could create a custom AdWords script that would alert you by email when any ad test has reached a certain statistical confidence level. Or you could funnel some of your workflow (new ad setup) through AdWords Campaign Experiments (ACE), which helpfully displays statistical significance notations (in the form of one, two, or three tiny arrows) next to all KPIs relevant to an experiment.

Let’s face it, there are probably easier paths you could take, like setting your ad test rotation settings on Google-led autopilot. But that’s easier in the same sense that you can order a pizza seven nights a week, and eat those leftovers for breakfast seven mornings a week, and probably not die. (For elaboration, see “Finger Cooking With Bill,” by Boston Pizza.)

There is “finger cooking,” and then there is actual cooking. Like learning to chop garlic and marinate the chicken. Like all hands-on pursuits, this has to be good for the soul. In a world grown soft, it’s important every so often to learn how something works.

Next time: how to get to the grocery store when your GPS isn’t working.

Title and homepage images courtesy of Shutterstock
